OpenAI vs Anthropic for Small Business: Which API in 2026

If you're building (or paying someone to build) AI automation for your business in 2026, you have two serious choices for the model in the middle of your workflow: OpenAI's GPT family or Anthropic's Claude family. Pricing pages don't make the trade-off easy: OpenAI lists six current models, Anthropic lists four, and the public per-token rates span roughly 75–100x between the cheapest and most expensive options on each side.

This piece is part of the complete guide to AI automation for small businesses in 2026; use that as the orientation map and this post as the model-picking reference. Here's the actual decision, with 2026 prices, where each one wins, and what the average small business pays per month on either platform — separate from what your automation builder costs to wire it up.

Key Takeaways

- For most small businesses in 2026, OpenAI's GPT-4o mini is the cheap workhorse at $0.15 per million input tokens / $0.60 per million output tokens (openai.com/api/pricing). Anthropic's Claude Haiku 4.5 is the comparable entry-tier option but runs roughly 7–8x more per token (6.7x on input, 8.3x on output).

- At the premium tier, the gap narrows: GPT-5 lists at ~$1.25 input / $10 output per million tokens, while Claude Sonnet 4.6 runs $3 input / $15 output (anthropic.com/pricing). Sonnet costs more per token but reliably handles longer context and more nuanced writing.

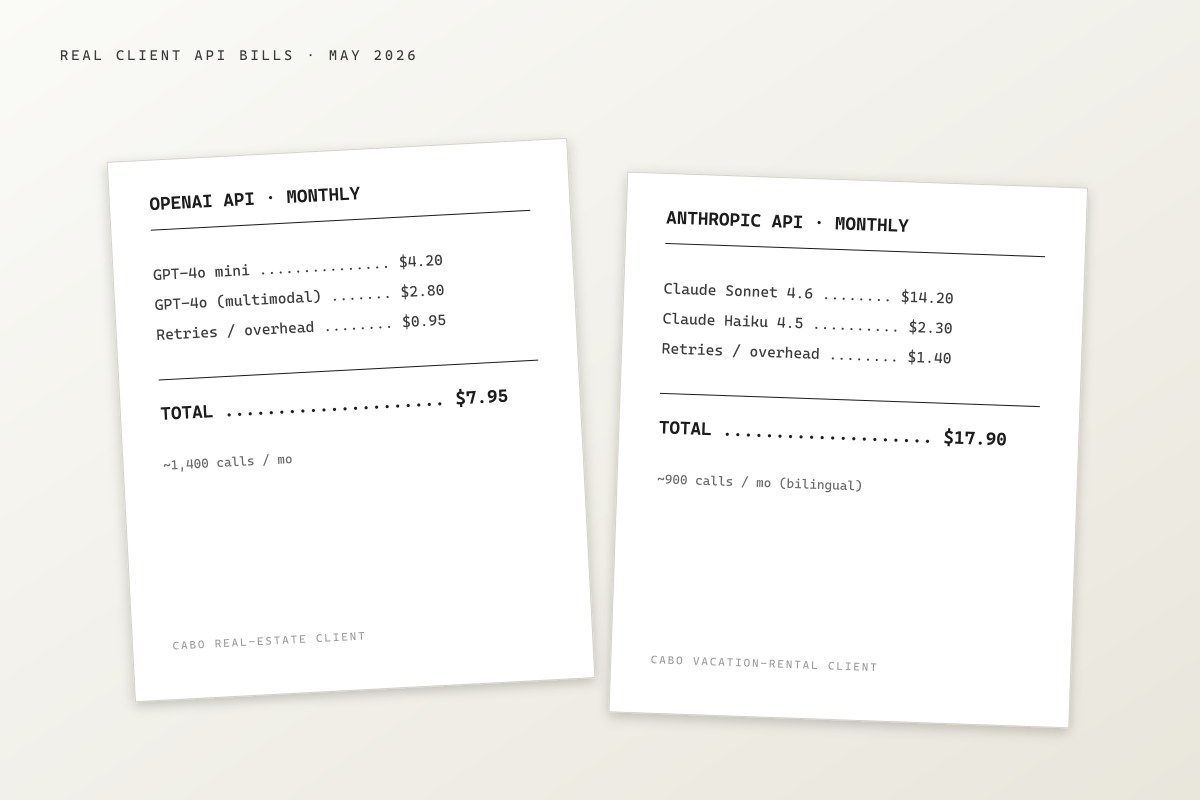

- The average small-business monthly bill in my own client book is $5–$30/month on OpenAI and $10–$50/month on Anthropic — both are rounding errors compared to the $500–$2,500/month most businesses pay for the build itself.

- Use OpenAI for high-volume, structured tasks: lead scoring, simple classification, JSON extraction, short-form generation. Use Claude for long documents, bilingual nuance, careful writing, and anything you'd be embarrassed to send a customer without proofreading.

- Most automation platforms (Make.com, n8n, Zapier) support both — there is no lock-in. You can A/B them in a single workflow.

For most small businesses in 2026, the right answer is OpenAI for volume, Claude for nuance, and both in your stack

The honest version of the comparison is this: OpenAI and Anthropic are the two serious foundation-model providers for business automation in 2026, both are reliable, and the gap on quality at the top of each lineup is narrow enough that price and fit-for-task should drive the decision — not brand loyalty.

OpenAI wins on price-per-token at the cheap tier and at the premium tier, on raw speed of inference, and on the breadth of integrations (every automation platform supports OpenAI first). Anthropic wins on longer context windows, more careful writing, stronger instruction-following on multi-step prompts, and on the rough consensus among practitioners that Claude is the better "writing partner" model when output quality matters more than throughput. Stanford's 2025 AI Index Report documented that frontier-model performance gaps between major labs have narrowed substantially — measured benchmarks now sit within a few points of each other across reasoning, coding, and language tasks (Stanford HAI, 2025 AI Index Report).

In practice, very few small businesses commit to one provider and stay there. Most end up using both, picking per use case. The good news: this costs you nothing — both APIs are pay-per-use with no minimums, and switching one call from openai to anthropic in a Make.com or n8n workflow is a 30-second change, not a migration project.

Pricing in 2026: OpenAI runs $0.15–$15 per million tokens, Anthropic runs $1–$75

Here's the live pricing as of May 2026, pulled from each provider's published rate cards. "Input" tokens are what you send to the model (the prompt + any context); "output" tokens are what the model generates back. A token is roughly 0.75 of an English word — so a 1,000-word document is about 1,300 tokens.

| Model | Input ($/M tokens) | Output ($/M tokens) | Best for |
|---|---|---|---|
| GPT-4o mini (OpenAI) | $0.15 | $0.60 | Cheap, high-volume classification, JSON extraction, short answers |
| GPT-4o (OpenAI) | $2.50 | $10 | General-purpose, multimodal, mid-tier production work |
| GPT-5 (OpenAI) | ~$1.25 | ~$10 | Premium reasoning at lower input cost than Sonnet |
| Claude Haiku 4.5 (Anthropic) | $1 | $5 | Entry-tier, cheaper than Sonnet for routine writing |
| Claude Sonnet 4.6 (Anthropic) | $3 | $15 | Long-context, careful writing, multi-step instructions |
| Claude Opus 4.7 (Anthropic) | $15 | $75 | Highest-quality output, complex agents, regulated content |

Source: openai.com/api/pricing and anthropic.com/pricing as of May 2026.

The headline number that matters for small business: GPT-4o mini is 6.7x cheaper per input token than Claude Haiku 4.5, and 8.3x cheaper per output token. If your workflow runs thousands of small AI calls per month (lead scoring, ticket classification, form parsing), this gap compounds fast.
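To make the arithmetic concrete, here is a minimal sketch of a monthly cost estimate built from the table rates. The dictionary keys are just labels for this example (not official API model IDs), and the workload of 1,000 calls at 500 input and 100 output tokens each is a made-up illustration, not client data.

```python
# Per-million-token rates (USD) copied from the pricing table above.
# The keys are labels for this sketch, not official API model IDs.
PRICES = {
    "gpt-4o-mini": {"input": 0.15, "output": 0.60},
    "claude-haiku-4.5": {"input": 1.00, "output": 5.00},
    "claude-sonnet-4.6": {"input": 3.00, "output": 15.00},
}

WORDS_PER_TOKEN = 0.75  # rule of thumb quoted in the text


def words_to_tokens(words: int) -> int:
    """Rough token estimate for English text."""
    return round(words / WORDS_PER_TOKEN)


def monthly_cost(model: str, calls: int, input_tokens: int, output_tokens: int) -> float:
    """Estimated monthly bill in USD for `calls` identical requests."""
    rate = PRICES[model]
    per_call = (input_tokens * rate["input"] + output_tokens * rate["output"]) / 1_000_000
    return calls * per_call


if __name__ == "__main__":
    # Hypothetical workload: 1,000 calls/month, 500 tokens in, 100 tokens out.
    for name in ("gpt-4o-mini", "claude-haiku-4.5"):
        print(name, monthly_cost(name, calls=1_000, input_tokens=500, output_tokens=100))
```

Under those assumed numbers the estimate comes out at about $0.135 a month on GPT-4o mini and $1.00 on Claude Haiku 4.5. The blended ratio lands between the input and output ratios because it depends on how many tokens you send versus how many you get back.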

The flip happens at the premium tier. Sonnet 4.6 costs more per token than GPT-5, but Sonnet typically delivers better writing quality on long documents and is more reliable on multi-step instructions where you can't afford a single missed step. That premium is worth paying for customer-facing output. It is not worth paying for log-line classification.

OpenAI wins on cost for structured, high-volume automation tasks

The clearest case for OpenAI in a small-business stack is anything that runs at volume on structured inputs and produces structured outputs. Examples from my own client engagements over the last 12 months:

- Lead qualification. A web form fires into Make.com, GPT-4o mini reads the message + a one-paragraph business context, and returns a JSON object with `intent`, `urgency`, `budget_signal`, and `route_to`. About 800 calls per month for a Cabo real-estate client. Total OpenAI bill: under $4/month. The same workload on Claude Haiku would run roughly $12–$18/month: still small, but 3–4x the cost for output that's not measurably better at this specific task.

- Inventory classification for restaurants. Photo of a delivery invoice → GPT-4o (multimodal) → structured line items into a Google Sheet. About 60 invoices per month. Around $6/month on OpenAI. This is documented in more detail in the restaurant inventory management with AI post.

- Spam filter on inbound email. GPT-4o mini scores incoming inquiries and routes obvious spam to archive. About 1,200 messages per month for one client. Roughly $1.50/month.
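For the lead-qualification case above, the "fixed-shape output" part is worth checking in code before anything downstream trusts it. The sketch below validates only that the four field names from that example are present; the function name and the sample values in the usage note are hypothetical, and a real workflow would likely also check allowed values per field.

```python
import json

# Field names taken from the lead-qualification example above.
REQUIRED_FIELDS = ("intent", "urgency", "budget_signal", "route_to")


def parse_lead(raw: str) -> dict:
    """Parse a model reply and confirm it is a JSON object with the expected fields.

    Raises ValueError so the calling workflow can retry or hand off to a human.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("reply is not a JSON object")
    missing = [field for field in REQUIRED_FIELDS if field not in data]
    if missing:
        raise ValueError(f"reply is missing fields: {missing}")
    return data
```

A reply such as `{"intent": "buy", "urgency": "high", "budget_signal": "strong", "route_to": "sales"}` passes; a reply wrapped in chatty prose or missing `route_to` raises, which is the signal to retry or escalate.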

OpenAI's pricing advantage is largest at the bottom of the lineup, which is where most small-business automation calls actually live. Gartner's 2024 CIO Generative AI Survey found that 63% of production AI workloads in surveyed enterprises run on lower-cost "mini" or "small" model tiers, not the flagship models (Gartner, 2024 CIO and Technology Executive Survey). The same pattern holds at the small-business scale, where I see roughly 80% of API calls land on the cheapest available model.

If your automation looks like "fixed-shape input → small AI step → fixed-shape output," and you run more than a few hundred of these per month, OpenAI's mini tier is the right default.

Claude wins on long context, careful writing, and bilingual nuance

The clearest case for Anthropic Claude is anything where the output goes in front of a customer, or where the input is too long to fit in OpenAI's mini-model context window.

Claude Sonnet 4.6 currently supports a 200,000-token context window (about 150,000 English words, or a 300-page document), and in Anthropic's published benchmarks it consistently outperforms OpenAI's mid-tier models on long-context retrieval tasks (Anthropic, Introducing Claude Sonnet 4.6, 2025). For a small business, the practical version of this is: you can paste an entire 40-page operations manual into the prompt and ask Claude to write a customer-facing FAQ from it, in one call, without chunking.
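A quick way to sanity-check whether a document fits is to turn pages into an estimated token count. This is a rough sketch: the 500 words per page figure is simply what "150,000 words is about 300 pages" implies, the 4,000-token reserve for the model's reply is my own assumption, and real token counts vary with language and formatting.

```python
WORDS_PER_TOKEN = 0.75           # rule of thumb for English text
CONTEXT_WINDOW_TOKENS = 200_000  # Sonnet 4.6 figure quoted above
WORDS_PER_PAGE = 500             # implied by "150,000 words ~ 300 pages"


def estimate_tokens(pages: int) -> int:
    """Rough token count for a document of the given length."""
    return round(pages * WORDS_PER_PAGE / WORDS_PER_TOKEN)


def fits_in_context(pages: int, reserve_for_output: int = 4_000) -> bool:
    """True if the document plus room for the reply fits in one call."""
    return estimate_tokens(pages) + reserve_for_output <= CONTEXT_WINDOW_TOKENS
```

By this estimate a 40-page manual is around 27,000 tokens and fits with plenty of headroom, while a full 300-page document fills the window on its own and leaves no room for instructions or a reply.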

Concrete cases from my own client work:

- Bilingual customer service drafting for a Cabo vacation rental. WhatsApp message comes in, Claude Sonnet drafts a reply in the matching language (Spanish or English), preserves the brand voice from a 600-word style guide pasted into the system prompt, and hands the draft to the property manager for review. About 250 messages/month. Roughly $14/month on Claude. The same workflow on GPT-4o mini drifted in tone within the first week and switched accidentally to English in messages that started in Spanish. The cost difference paid for itself in not-having-to-apologize-to-guests within a month.

- Long-form content drafts. A client running an English-language Cabo magazine uses Claude Sonnet to draft 1,500–2,000-word articles from interview transcripts. About 8 drafts per month. Roughly $20/month. The drafts need light editing, but the structural quality is closer to "second pass already done."

- Policy review and contract redlining. Claude is meaningfully better than GPT-4o mini at "read this 12-page contract and flag clauses that conflict with our standard MSA." This isn't a benchmark claim — it's that the model holds context across pages better, and is less likely to silently lose track of an earlier clause it should have flagged.

If your automation produces customer-facing prose, handles long documents, or operates bilingually, Claude is usually worth the extra spend. The cost difference at small-business volumes is single-digit to low-double-digit dollars per month — not the kind of number that should drive the decision.

What you actually pay per month in 2026: $5–$50 for most small businesses

Across the four small-business engagements I've taken on in 2025–2026 where I track API spend explicitly, the actual monthly bills landed in this range:

- OpenAI: $3–$28/month, median around $8/month. Mostly GPT-4o mini calls, with occasional GPT-4o usage on multimodal tasks.

- Anthropic: $9–$47/month, median around $18/month. Mostly Sonnet 4.6 for customer-facing prose, Haiku 4.5 for cheaper internal automations.

These are real numbers from four engagements, 2025–2026 — not synthesized industry averages. The volume in each business is similar: 500–2,000 AI-touched workflow runs per month. The reason the Anthropic numbers run higher is exclusively the per-token premium, not different volume.

For context, Andreessen Horowitz's 2024 Enterprise AI Adoption Report observed that enterprises typically spend $10,000–$100,000 per month on foundation-model APIs at production scale (a16z, 16 Changes to the Way Enterprises Build and Buy Generative AI, 2024). Small businesses sit roughly three orders of magnitude below that, and the practical implication is that the API bill is not where you should be optimizing. The build cost ($500–$2,500/month for a small-business retainer, per the AI automation pricing breakdown) is roughly 10–500x larger than the API cost.

In other words: pick the model that produces the best output for the job, not the one that saves you $7/month.

How to run both providers in the same stack without lock-in

A misconception worth killing early: choosing OpenAI vs Anthropic is not a one-time architectural commitment. In any modern automation platform, you can call both APIs in the same workflow, swap them in 30 seconds, or A/B them on the same task to compare quality. Concretely:

- Make.com has native modules for both OpenAI and Anthropic. You configure them with separate API keys and call whichever module fits the step. Most production small-business stacks have both keys configured even if 90% of calls go to one provider.

- n8n supports both via dedicated nodes (and via raw HTTP calls if you want to use a beta model the node hasn't been updated for yet). The n8n for restaurant operations post walks through this pattern.

- Zapier integrates OpenAI natively and Anthropic via Zapier's official "Anthropic (Claude)" app. Both are first-class citizens in 2026.

- Custom code (n8n function nodes, Vercel functions, Cloudflare Workers, etc.) lets you call either provider via its REST API in 5 lines of code.
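For the custom-code route, here is a minimal sketch of what calling either provider over REST can look like, using only the Python standard library. The endpoints and header names follow each provider's public API documentation as I understand it; the environment variable names and the `YOUR-CLAUDE-MODEL-ID` placeholder are assumptions, so copy the exact Claude model ID from Anthropic's model list before running it.

```python
import json
import os
import urllib.request


def openai_request(prompt: str, model: str = "gpt-4o-mini") -> urllib.request.Request:
    """Build (but do not send) a Chat Completions request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        "https://api.openai.com/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {os.environ.get('OPENAI_API_KEY', '')}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def anthropic_request(
    prompt: str, model: str = "YOUR-CLAUDE-MODEL-ID", max_tokens: int = 1024
) -> urllib.request.Request:
    """Build (but do not send) a Messages API request. The model ID is a placeholder."""
    body = {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        "https://api.anthropic.com/v1/messages",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": os.environ.get("ANTHROPIC_API_KEY", ""),
            "anthropic-version": "2023-06-01",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send a prepared request and return the decoded JSON reply."""
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response)


# Reading the text out of each reply:
#   OpenAI:    reply["choices"][0]["message"]["content"]
#   Anthropic: reply["content"][0]["text"]
```

Keeping request building separate from sending means swapping providers for one step is a one-line change (`openai_request` to `anthropic_request`), which is the same idea as swapping modules in Make.com or n8n.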

The practical recommendation: configure both API keys in your platform from day one, even if you only plan to use OpenAI. The marginal cost of having an unused Anthropic account is $0 (you only pay per use), and the first time you hit a task where Claude clearly does better, you don't have to stop your build to set up an account.

Hidden costs both APIs share

Whatever you pick, three cost categories are almost never on the published pricing page:

- Rate limits and tier ramp. Both providers tier their API access — new accounts start with strict per-minute and per-day caps. OpenAI's "Tier 1" caps GPT-4o mini at $100/month and 500 requests per minute; you graduate to higher tiers automatically as you spend (openai.com/docs/guides/rate-limits). Anthropic uses a similar model. For a small business at $10–$50/month, you'll never hit these. For one that suddenly scales to 50,000 calls a day, you will — plan for tier ramp early.

- Prompt-iteration overhead. Each time you change the prompt to fix a behavior, you pay for the test runs. In practice this adds 10–25% to the API bill during the first month of a workflow and drops to near-zero after. Budget for it.

- Failed-call retries. Both APIs occasionally return errors, timeouts, or malformed JSON when you asked for structured output. Production workflows retry, and you pay for the retries. Build a max-retry cap (typically 3) into every AI step or you'll occasionally see a $200 month from a runaway retry loop. This is one of the common AI automation mistakes I see most often in unsupervised builds.

These costs are real but small. Across the four engagements above, the combined "hidden" overhead was 12–18% of the visible API bill — meaningful but not budget-breaking.
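The max-retry cap from the failed-call bullet can be sketched in a few lines. This is an illustration under assumptions, not a production wrapper: the function name is hypothetical, the exponential backoff between attempts is my own addition, and a real build would usually retry only on specific error types rather than on every exception.

```python
import time

MAX_RETRIES = 3  # the "typically 3" cap mentioned above


def call_with_retry_cap(step, max_retries=MAX_RETRIES, base_delay=1.0, sleep=time.sleep):
    """Run `step` (a zero-argument callable) with a hard cap on retries.

    Makes at most 1 + max_retries attempts, waiting base_delay * 2**attempt
    seconds between them, then gives up instead of looping forever.
    """
    last_error = None
    for attempt in range(max_retries + 1):
        try:
            return step()
        except Exception as exc:  # narrow this to API/timeout errors in real use
            last_error = exc
            if attempt < max_retries:
                sleep(base_delay * 2 ** attempt)
    raise RuntimeError(f"step failed after {max_retries + 1} attempts") from last_error
```

Wrapping every AI step this way bounds the worst case: a step that keeps failing costs you four calls, not four thousand.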

My recommendation for the average small business in 2026

If you're starting from zero and you want a single default rule:

- Default to OpenAI GPT-4o mini for any high-volume, structured automation step. It is the cheapest reliable model for "fixed-shape input → fixed-shape output" work in 2026.

- Reach for Claude Sonnet 4.6 the moment a step produces output a customer will read, handles a long document, or runs in Spanish + English. The per-token premium over GPT-4o mini is large (20–25x at list prices), but at small-business volume it works out to single-digit to low-double-digit dollars per month, and the quality difference is visible.

- Skip the premium tiers (GPT-5, Claude Opus 4.7) until you have a specific task that requires them. Most small-business automations don't.

- Configure both API keys from day one so you never have to pause a build to set up an account when you realize the other model is better at a given step.

- Don't optimize API spend below $50/month. The build cost dwarfs it. Optimize the build for output quality, not for shaving $5 off the monthly model bill.
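The first two rules in the list above reduce to a small routing function. This is a sketch of the rule of thumb, not a tested policy: the `Step` fields and the returned names are hypothetical labels for this example, and you would map them to real model IDs in your own platform.

```python
from dataclasses import dataclass


@dataclass
class Step:
    """Hypothetical description of one AI step in a workflow."""
    customer_facing: bool = False
    long_document: bool = False
    bilingual: bool = False


def pick_model(step: Step) -> str:
    """Default to the cheap model; step up when quality is visible to customers."""
    if step.customer_facing or step.long_document or step.bilingual:
        return "Claude Sonnet 4.6"
    return "GPT-4o mini"
```

For example, `pick_model(Step())` returns the GPT-4o mini label for a plain classification step, while `pick_model(Step(bilingual=True))` returns the Sonnet label.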

For a deeper view on the full stack (which automation platform, which tools, which integrations), the how to choose AI automation tools walkthrough covers the layer above the model choice.

FAQ

Is OpenAI or Anthropic better for small business in 2026? Neither is universally better. OpenAI wins on price-per-token at the cheap tier (GPT-4o mini is roughly 7–8x cheaper than Claude Haiku 4.5), and Anthropic wins on long-context handling and careful writing for customer-facing output. Most small-business AI automation stacks in 2026 use both APIs in the same workflow, picking the model per step. Switching between them is a 30-second change in Make.com, n8n, or Zapier, so there is no lock-in.

How much do small businesses actually spend on the OpenAI API per month? Across the small-business engagements I track explicitly, the typical OpenAI bill in 2026 is $3–$28/month, median around $8/month, for workflows running 500–2,000 AI-touched tasks per month. The bulk of that cost lands on GPT-4o mini at $0.15/M input tokens and $0.60/M output tokens. Multimodal tasks (image input) on GPT-4o run $2.50/M input tokens and bump the bill modestly. Anyone quoting you more than $100/month on OpenAI for typical small-business automation should justify the volume; that's enterprise territory.

What's the difference between GPT-4o mini and Claude Haiku for small business? Both are the "entry-tier, cheap" model in their respective lineups. GPT-4o mini is cheaper per token (about 6.7x cheaper on input, 8.3x cheaper on output) and faster on most short prompts. Claude Haiku 4.5 is more reliable on careful instruction-following and slightly better at writing in non-English languages, including Spanish. For classification, JSON extraction, and short-form generation: GPT-4o mini. For anything customer-facing where tone matters: Claude Haiku, or step up to Sonnet 4.6.

Do automation platforms like Make.com and n8n support both OpenAI and Anthropic? Yes — Make.com, n8n, and Zapier all support both as native integrations in 2026, with first-class modules for each. You configure separate API keys for each provider, and you call whichever module fits the step. Most production small-business automations have both keys configured even if most calls go to one provider, because swapping providers per step is the standard pattern. The Make.com getting started guide covers the wiring for a beginner-level workflow.

Should a Spanish-speaking business in Mexico prefer Claude or GPT? For bilingual customer-facing output (WhatsApp replies to Spanish-speaking guests, Spanish marketing copy, contracts in Spanish), Claude Sonnet 4.6 holds tone and language more reliably than GPT-4o mini in my client work. The quality gap is visible enough on the first run that I default to Claude for any bilingual step, even though it costs roughly 20–25x more per token at list prices. At small-business volume (under 2,000 calls/month), the absolute dollar difference is under $20/month, which is well worth it for a hospitality, real-estate, or healthcare business where the customer-facing language matters.

What about Google Gemini, Mistral, or other models? Gemini is competitive on pricing and is the right choice if your stack already lives in Google Workspace and Vertex AI. Mistral, Cohere, and Llama-based providers are price-competitive for self-hosted use. For most small businesses in 2026, the easier decision is still OpenAI vs Anthropic — both have the largest automation-platform integration footprint, the most stable APIs, and the most predictable pricing. Once you have a working stack on OpenAI or Anthropic, adding Gemini or Mistral as a third provider for a specific use case is straightforward.